Daily Drop (1295)

05-11-26

Monday, May 11, 2026 // (IG): BB // Ghostwire

Persistent Drone Surveillance Is Killing the Battlefield “Golden Hour” in Ukraine

Bottom Line Up Front (BLUF): A former British Army and Ukrainian reconnaissance operator argues that persistent drone surveillance and near-instant fires integration have fundamentally broken Western battlefield casualty evacuation doctrine. On the modern battlefield, casualty evacuation is no longer primarily a medical problem—it is a high-risk tactical event where movement itself attracts lethal targeting.

Analyst Comments: Western militaries spent two decades operating under assumptions built during the Global War on Terror: air superiority, uncontested logistics, protected medevac corridors, and rapid CASEVAC timelines. Ukraine is demonstrating that those assumptions collapse in a peer conflict where every movement generates a detectable signature. The key insight here is that the “golden hour” is not failing because medical capability declined. It is failing because the battlefield has become transparent. Persistent drone observation means casualty extraction itself becomes targetable. A wounded soldier no longer represents one casualty event—he becomes bait for follow-on strikes against stretcher teams, medics, evacuation routes, and reinforcement efforts.

READ THE STORY: War on the Rocks

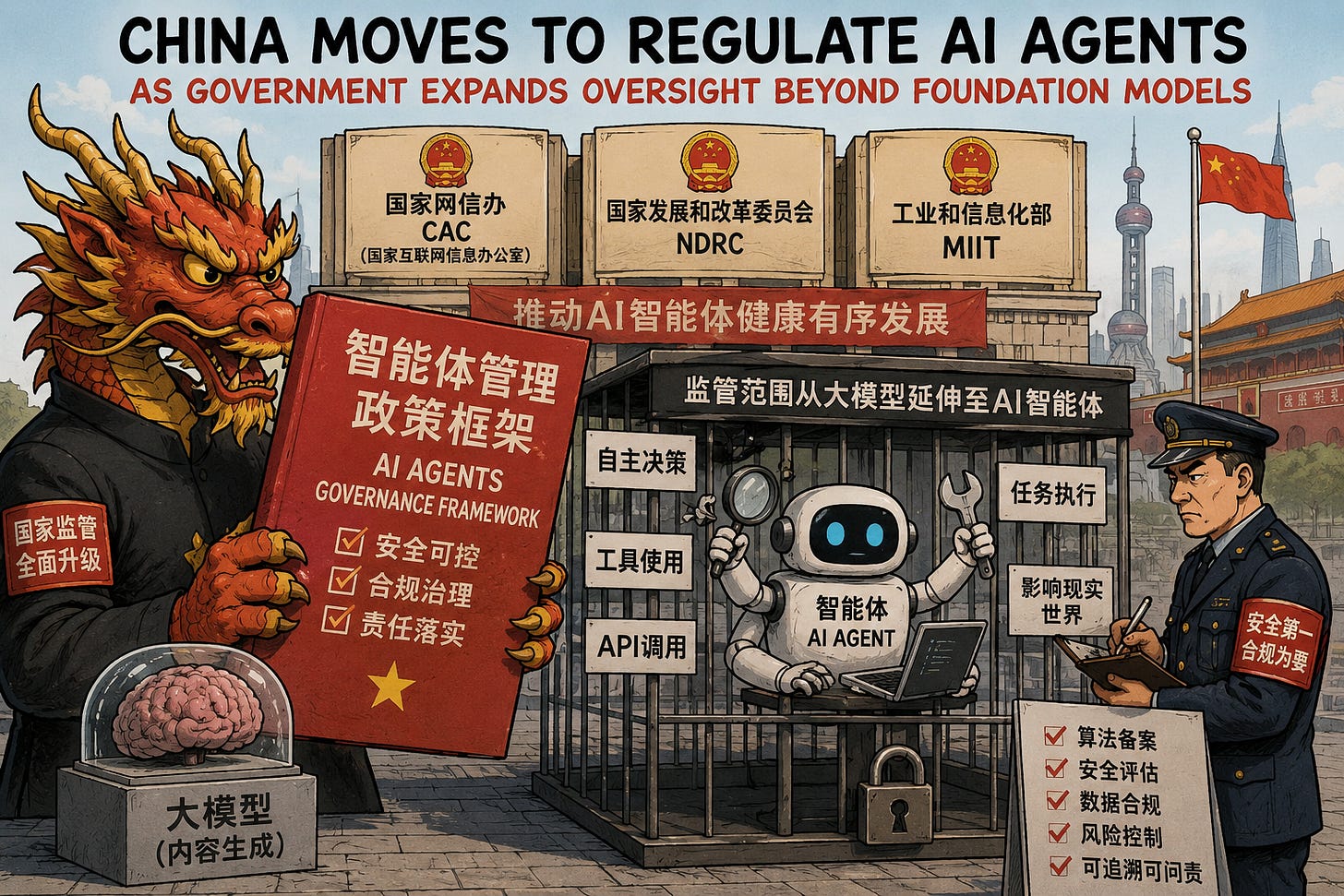

China Moves to Regulate AI Agents as Government Expands Oversight Beyond Foundation Models

Bottom Line Up Front (BLUF): China’s Cyberspace Administration, along with the NDRC and Ministry of Industry and Information Technology, released a new policy framework establishing governance, security, and compliance requirements for AI agents (“智能体”). The guidance shifts regulatory focus from content-generation models toward autonomous systems capable of decision-making, tool use, API interaction, and real-world task execution.

Analyst Comments: This is one of the clearest signals yet that governments are beginning to treat AI agents as operational actors rather than just software products. The important distinction in the policy is the move from “content governance” to “behavior governance.” Regulators are no longer only concerned with hallucinations or harmful outputs—they are preparing for systems that can autonomously execute workflows, access databases, trigger transactions, control devices, and interact with other agents. The language around “behavior fencing,” permission boundaries, auditability, and traceability mirrors the exact concerns security researchers have been raising around MCP servers, autonomous tool use, memory poisoning, prompt injection, and agentic privilege escalation. In practice, China is trying to formalize security controls for autonomous systems before large-scale enterprise deployment fully takes off.

READ THE STORY: Freebuf

True Sovereign Cloud Is Impossible Outside the US or China

Bottom Line Up Front (BLUF): Gartner analysts warned that fully sovereign cloud infrastructure is effectively unattainable outside the United States or China because only those countries control the complete technology stack required to operate truly independent cloud environments. The assessment comes amid growing European concerns over geopolitical dependence on US hyperscalers and the lack of viable cloud exit strategies.

Analyst Comments: “Sovereign cloud” has become one of the most overloaded terms in enterprise IT, often marketed as data residency plus contractual assurances. Gartner is pointing out that real sovereignty means control over infrastructure, hardware supply chains, software stacks, operational dependencies, legal jurisdiction, and service continuity. Very few organizations—or countries—actually have that. The uncomfortable part for Europe is that many so-called sovereign offerings are still built on top of AWS, Microsoft Azure, or Google Cloud technology. Even “local” deployments like Outposts or Azure Local still depend on upstream vendor control planes, licensing, telemetry, patching, and support channels. As Gartner bluntly put it: they still “phone home.”

READ THE STORY: The Register

Cheap SDR Hardware and Legacy TETRA Weaknesses Expose Critical Infrastructure Risk Worldwide

Bottom Line Up Front (BLUF): A reported disruption affecting Taiwan’s high-speed rail system highlighted how inexpensive software-defined radio (SDR) hardware and replay attacks against legacy TETRA radio infrastructure can threaten critical transportation systems. The incident reportedly involved a university student using commercially available SDR tooling rather than a sophisticated state-backed operation.

Analyst Comments: The uncomfortable takeaway here is not that a student disrupted rail communications—it is that critical infrastructure systems globally are still relying on aging wireless protocols designed for a very different threat environment. TETRA was built in an era when radio interception and signal injection required specialized equipment and deep expertise. SDR platforms like HackRF have completely destroyed that barrier. This is the broader democratization of electronic warfare. Capabilities that once required nation-state budgets now exist in open-source tooling, cheap hardware, GitHub repositories, and YouTube tutorials. Replay attacks, signal spoofing, protocol fuzzing, RF injection, GPS manipulation, and wireless interception are no longer niche capabilities.
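To make the replay risk concrete, here is a minimal sketch of the kind of freshness check that defeats a simple record-and-retransmit attack. It is not how TETRA's air-interface security works; the shared key, the frame layout, and the `Receiver` class are all illustrative assumptions. The receiver authenticates each frame with an HMAC and rejects any counter value it has already seen, so a captured frame cannot be accepted twice.

```python
import hashlib
import hmac
import struct

KEY = b"demo-shared-key"  # hypothetical pre-shared key, for illustration only
TAG_LEN = 32              # HMAC-SHA256 output size in bytes


def make_frame(counter: int, payload: bytes, key: bytes = KEY) -> bytes:
    """Build a frame: 8-byte big-endian counter + payload + HMAC-SHA256 tag."""
    body = struct.pack(">Q", counter) + payload
    tag = hmac.new(key, body, hashlib.sha256).digest()
    return body + tag


class Receiver:
    """Accepts a frame only if its tag verifies and its counter is strictly newer."""

    def __init__(self, key: bytes = KEY) -> None:
        self.key = key
        self.last_counter = -1

    def accept(self, frame: bytes) -> bool:
        if len(frame) < 8 + TAG_LEN:
            return False  # too short to hold a counter and a tag
        body, tag = frame[:-TAG_LEN], frame[-TAG_LEN:]
        expected = hmac.new(self.key, body, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            return False  # forged or corrupted frame
        (counter,) = struct.unpack(">Q", body[:8])
        if counter <= self.last_counter:
            return False  # replayed or stale frame
        self.last_counter = counter
        return True
```

A protocol that authenticates messages but carries no counter, timestamp, or nonce would pass the tag check on a retransmitted frame, which is the gap a replay attack exploits.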

READ THE STORY: The Register

Iran War Complicates Trump-Xi Summit as Beijing Balances Trade, Energy, and Tehran Ties

Bottom Line Up Front (BLUF): President Trump’s upcoming visit to China is expected to be overshadowed by the ongoing Iran conflict, growing instability around the Strait of Hormuz, and Beijing’s economic relationship with Tehran. While both Washington and Beijing are signaling interest in maintaining trade dialogue, the war has introduced new geopolitical leverage points around energy security, sanctions, and regional influence.

Analyst Comments: This trip is shaping up less like a breakthrough summit and more like a high-stakes geopolitical balancing exercise. China needs stability in the Gulf because it remains heavily dependent on Iranian energy flows and broader Middle East shipping lanes. The US, meanwhile, wants Beijing to use its economic leverage over Tehran while simultaneously competing with China on trade, technology, and global influence. The interesting dynamic is that both sides now have overlapping incentives despite broader rivalry. Washington wants pressure on Iran to keep Hormuz open and avoid global economic fallout. Beijing wants to prevent sustained energy disruption that could further slow China’s economy. That creates room for tactical cooperation even while strategic competition remains intact.

READ THE STORY: AP

Trump Rejects Iranian Response to Ceasefire Proposal as Strait of Hormuz Tensions Escalate

Bottom Line Up Front (BLUF): President Trump publicly rejected Iran’s response to a US-backed ceasefire and peace proposal, calling it “totally unacceptable” as clashes around the Strait of Hormuz continue despite a fragile month-old ceasefire. The standoff is increasing fears of broader regional escalation, renewed disruption to global energy markets, and prolonged instability around one of the world’s most critical maritime chokepoints.

Analyst Comments: The biggest risk here is not necessarily immediate full-scale war—it is sustained instability around the Strait of Hormuz becoming the “new normal.” Even limited maritime clashes, tanker strikes, drone activity, and blockade enforcement operations are enough to rattle global energy markets and raise shipping insurance, transit costs, and geopolitical risk calculations. The negotiation posture from both sides suggests they are still trying to avoid uncontrolled escalation while simultaneously maximizing leverage. Iran appears focused on sanctions relief, reopening maritime access, and separating nuclear negotiations from ceasefire terms. The Trump administration, meanwhile, is signaling that any agreement must include broader concessions tied to Iran’s nuclear program and regional military activity.

READ THE STORY: The Washington Post

Token Theft Weaponization: Red Teamers Demonstrate MFA Bypass and Persistent Access Against Microsoft Entra Environments

Bottom Line Up Front (BLUF): A detailed red-team writeup published on FreeBuf outlines how attackers can weaponize token theft, Evilginx phishing, device code abuse, and Microsoft Entra trustType bypasses to gain persistent access to Microsoft 365 cloud environments. The research demonstrates how weak Conditional Access configurations, unmanaged MFA registration flows, and dormant accounts can enable long-term compromise even in Entra ID joined environments.

Analyst Comments: This is less a “new exploit” and more a brutally practical walkthrough of how modern cloud identity attacks actually happen. The writeup chains together well-known offensive tooling—Evilginx, TokenTactics, ROADrecon, GraphRunner, roadtx—and shows how gaps in Conditional Access design can still collapse enterprise defenses. The standout issue is the abuse of trustType logic and device-code authentication flows to sidestep assumptions around “managed device only” access.

READ THE STORY: Freebuf

OpenClaw Malware Campaign Targets Crypto Wallets and Password Managers With Advanced Rust-Based Loader Framework

Bottom Line Up Front (BLUF): Researchers uncovered a malware campaign impersonating the OpenClaw project to distribute a sophisticated Rust-based malware framework targeting cryptocurrency wallets and password managers including MetaMask, Phantom, Ledger Live, and Bitwarden. The malware uses layered anti-analysis techniques, in-memory execution, Telegram-based C2 infrastructure, and multiple persistence mechanisms designed to survive remediation efforts.

Analyst Comments: Pairing Rust with in-memory .NET execution through clroxide is especially notable because it blends native and managed execution paths in a way many defensive products still struggle to inspect cleanly. The targeting strategy also reflects where cybercriminal economics currently sit. Crypto wallets and password managers remain high-value aggregation points for identity, authentication, and direct financial theft. The ability to dynamically update targeted extensions from server-side manifests gives operators long-term flexibility without needing to redeploy binaries.

READ THE STORY: GBhackers

JDownloader Supply Chain Attack Delivers Python RAT to Millions of Potential Victims

Bottom Line Up Front (BLUF): Attackers compromised the official JDownloader website and replaced legitimate installer download links with trojanized packages containing a Python-based remote access trojan (RAT). The malicious installers were available between May 6–7, 2026, potentially exposing millions of Windows and Linux users to persistent compromise through a highly convincing supply chain attack.

Analyst Comments: This is exactly why software supply chain attacks remain one of the most dangerous intrusion vectors. Users did everything right here: they downloaded software from the legitimate vendor website and still got backdoored. The attackers avoided tampering with JDownloader itself and instead compromised the distribution layer, which is both quieter and harder for users to detect. The malware design also shows a level of maturity increasingly common in modern commodity intrusion tooling. Multi-stage loaders, XOR-encrypted payloads, RC4-protected dead-drop C2 resolution, PyArmor obfuscation, and fallback infrastructure hosted across legitimate platforms all point to operators who expected defenders to reverse engineer the payload quickly and built resilience accordingly.
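One partial mitigation for a compromised download page is to compare an installer's hash against a value obtained through an independent channel. A hash published on the same compromised site offers no protection, since the attacker can swap it too. The sketch below is a generic illustration, not a JDownloader-specific procedure; the helper names and the source of the expected hash are assumptions.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path: Path, expected_hex: str) -> bool:
    """Compare the file's digest to an expected value from a trusted, separate source."""
    normalized = expected_hex.strip().lower()
    return hmac.compare_digest(sha256_of(path), normalized)
```

Calling `matches_expected(Path("installer.bin"), known_good_hash)` returns `False` for any file whose contents differ from the one the hash was computed over. Signature verification against a vendor key is a stronger control where it is available.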

READ THE STORY: Freebuf

Critical Ollama Flaws Expose AI Server Memory and Enable Persistent Windows Code Execution

Bottom Line Up Front (BLUF): Researchers disclosed multiple critical vulnerabilities in Ollama that could allow attackers to leak sensitive AI process memory remotely and achieve persistent code execution on Windows systems. The most severe flaw, dubbed “Bleeding Llama” (CVE-2026-7482), affects exposed Ollama servers and may expose API keys, prompts, proprietary data, and user conversations through a crafted GGUF model file.

Analyst Comments: Ollama is becoming a high-value target because it often sits close to sensitive AI workflows, developer tools, prompts, API keys, and proprietary data. The dangerous part is that many teams treat local AI infrastructure like harmless dev tooling, then expose it without authentication or proper segmentation. Bleeding Llama is especially bad because it turns model creation into a memory disclosure channel. A crafted GGUF file can make Ollama read past allocated heap memory, potentially exposing secrets from the running process. That could include environment variables, system prompts, user conversations, customer data, and outputs from connected coding or agent tools.
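The class of bug described here, trusting a length field from an untrusted file, can be illustrated with a small parser sketch. This is not Ollama's code, and the record layout (an 8-byte little-endian length followed by UTF-8 bytes) is a hypothetical stand-in rather than a statement about the GGUF format. Python slicing would truncate rather than leak memory, so the point is the validation step a native parser needs before it reads.

```python
import struct


def read_string(buf: bytes, offset: int) -> tuple[str, int]:
    """Read a length-prefixed UTF-8 string; return (value, next_offset).

    Raises ValueError when the declared length does not fit in the buffer,
    instead of reading beyond the data that was actually supplied.
    """
    if offset < 0 or offset + 8 > len(buf):
        raise ValueError("truncated length field")
    (length,) = struct.unpack_from("<Q", buf, offset)
    start = offset + 8
    if length > len(buf) - start:
        raise ValueError("declared length exceeds remaining data")
    return buf[start:start + length].decode("utf-8"), start + length
```

In a memory-unsafe language, skipping the second check and copying `length` bytes would pull in whatever sits next to the buffer on the heap, which matches the over-read behavior described above.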

READ THE STORY: THN

AgentBound Introduces Android-Style Permission Controls for MCP Servers and AI Agents

Bottom Line Up Front (BLUF): Experts proposed “AgentBound,” a security framework designed to enforce permission boundaries around MCP (Model Context Protocol) servers used by AI agents. The framework introduces Android-style permission declarations and runtime sandboxing to prevent AI tools from accessing files, networks, environment variables, or system resources beyond explicitly authorized limits.

Analyst Comments: Prompt injection, tool abuse, poisoned MCP servers, and compromised plugins are not edge cases anymore—they are expected operating conditions. The real question is whether the underlying execution environment can contain the blast radius. AgentBound’s biggest contribution is shifting AI security away from “did the model behave correctly?” toward “could the process technically perform the action at all?” That is a much more mature security mindset. If an MCP server never receives permission to read SSH keys or exfiltrate data to arbitrary domains, prompt injection becomes dramatically less dangerous because the execution boundary blocks the action even if the model is tricked.
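The "could the process perform the action at all?" idea can be sketched as a deny-by-default permission check. The `Manifest` structure and function names below are hypothetical and do not reflect AgentBound's actual manifest format or enforcement mechanism. Real enforcement would sit in a sandbox or OS layer rather than in an in-process check like this one.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Manifest:
    """Hypothetical permission declaration for one MCP server."""
    read_paths: tuple[str, ...] = ()     # directory roots the server may read
    network_hosts: tuple[str, ...] = ()  # exact hostnames it may contact


def may_read(manifest: Manifest, path: str) -> bool:
    """Allow a read only if the path sits under a declared root."""
    target = PurePosixPath(path)
    if ".." in target.parts:
        return False  # refuse traversal segments rather than trying to resolve them
    return any(
        target.is_relative_to(PurePosixPath(root)) for root in manifest.read_paths
    )


def may_connect(manifest: Manifest, host: str) -> bool:
    """Allow a connection only to an explicitly declared hostname."""
    allowed = {h.lower() for h in manifest.network_hosts}
    return host.lower() in allowed
```

With a manifest that declares only `/workspace` and `api.example.com`, a request to read `/home/user/.ssh/id_rsa` or to contact any other host is refused regardless of what the model was tricked into asking for.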

READ THE STORY: Freebuf

Items of interest

Thousands of Vibe-Coded Apps Expose Corporate and Personal Data on the Open Web

Bottom Line Up Front (BLUF): RedAccess researchers found more than 5,000 publicly accessible AI-generated web apps built with platforms including Lovable, Replit, Base44, and Netlify. Roughly 40% reportedly exposed sensitive personal or corporate data, including medical information, financial records, strategy documents, chatbot logs, and customer details.

Analyst Comments: This is shadow IT with a launch button. The issue is not just buggy AI code; it is non-technical users deploying internet-facing apps without authentication, access controls, or security review. The parallels to exposed S3 buckets are obvious: vendors can blame user configuration, but insecure defaults and weak guardrails become a platform problem at scale. Expect attackers to mine these apps for credentials, customer data, internal documents, admin access, and phishing infrastructure.

READ THE STORY: Wired

Vibe Coding is a Trap (What Senior Devs See That You Don't) (Video)

FROM THE MEDIA: Are you falling for the "vibe coding" trend? In this video, we expose the truth behind vibe coding and reveal why it can be a trap for aspiring developers. You'll learn what is vibe coding, the hidden pitfalls, and what senior devs see that you don't.

The “vibe coding” mind virus explained… (Video)

FROM THE MEDIA: Let’s take a look at the latest craze in the programming world known as "vibe coding". Learn how to vibe code properly and look into the pros and cons of AI coding techniques.

The selected stories cover a broad range of cyber threats and are intended to help readers frame key publicly discussed threats and improve overall situational awareness. InfoDom Securities does not endorse any third-party claims made in its original material or related links on its sites; the opinions expressed by third parties are theirs alone. For further questions, don’t hesitate to get in touch with InfoDom Securities at dominanceinformation@gmail.com.