Daily Drop (1266)

03-25-26

Wednesday, Mar 25, 2026 // (IG): BB // Ghostwire

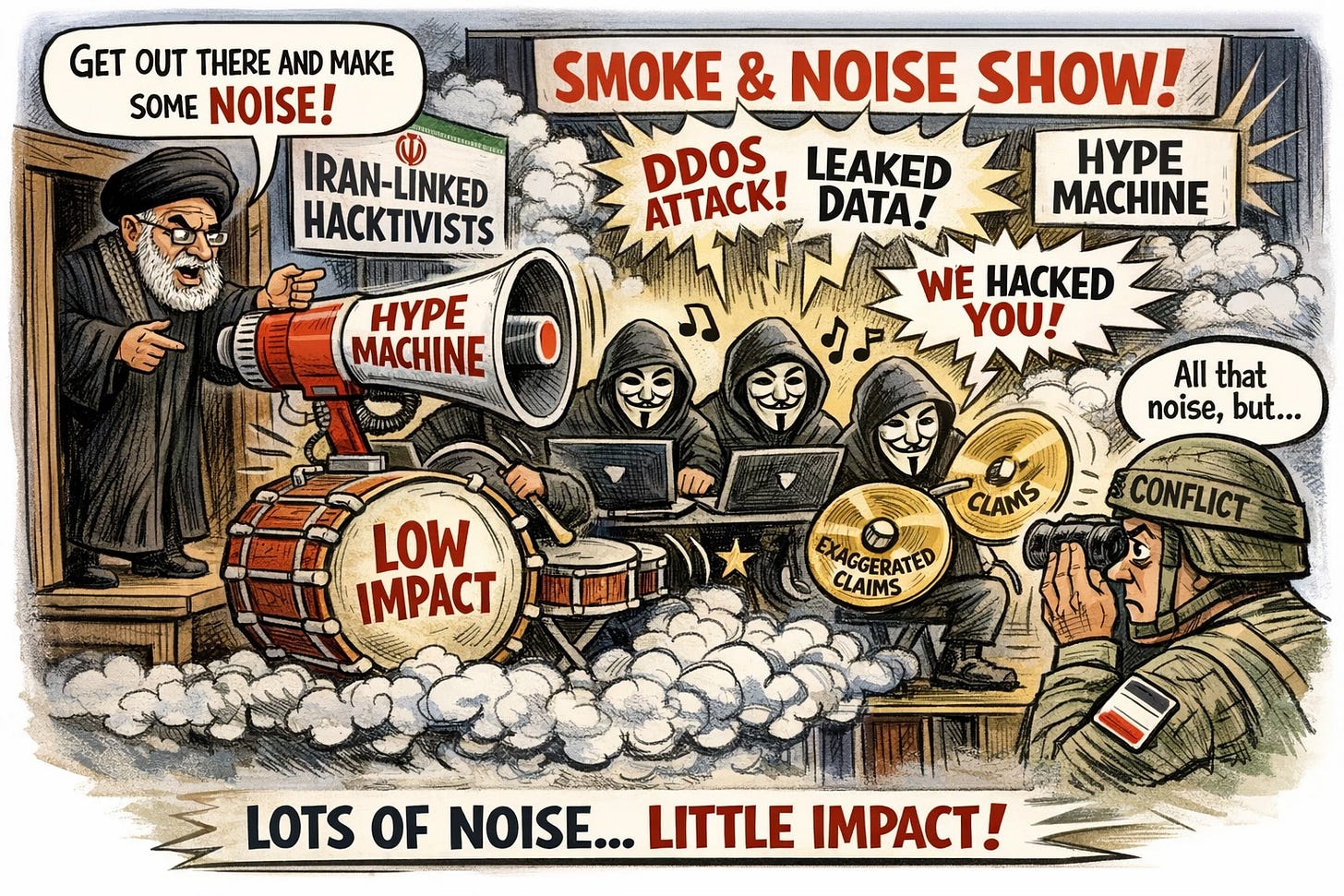

Iran-Linked Hacktivists Drive Noise Over Impact in Ongoing Conflict

Bottom Line Up Front (BLUF): Iran-aligned hacktivist groups have increased cyber activity during the ongoing conflict, but measurable operational impact remains limited. Most campaigns rely on exaggerated claims, minor disruptions, and psychological effects rather than sustained or high-impact cyber operations.

Analyst Comments: The spike in activity is real—phishing campaigns and opportunistic attacks surged—but impact doesn’t match the narrative. Hacktivist groups are inflating claims, targeting softer supply chain entities, and repackaging minor data leaks as major breaches. What matters is intent. These groups aren’t trying to win technically—they’re trying to shape perception. If a company thinks it’s been breached, or if the public believes infrastructure is under attack, that’s already partial success. The “fog of war” applies here. Attribution is messy, verification is slow, and attackers exploit that gap. Even weak attacks can create outsized psychological and reputational effects.

READ THE STORY: DR

AI-Generated Fake Policies Mark Shift to “Cognitive Layer Attacks” in Cybersecurity

Bottom Line Up Front (BLUF): AI-generated fake policy documents are emerging as a new class of “cognitive attacks,” designed to manipulate perception rather than exploit systems. These campaigns leverage high-fidelity AI content and targeted distribution to influence decision-making, signaling a shift from technical compromise to trust and information integrity as primary attack surfaces.

Analyst Comments: This is where cybersecurity starts to blur into information warfare. No exploit chain, no malware—just believable content delivered at scale. The key shift is from system compromise to decision compromise. If attackers can influence how organizations interpret regulation, risk, or urgency, they don’t need access to the network—they can shape outcomes indirectly. What makes this dangerous is the combination of scale and precision. AI can generate thousands of highly realistic “official” documents daily, then push them into the exact audiences most likely to react—compliance teams, executives, policymakers. That’s targeted psychological pressure, not just misinformation.

READ THE STORY: 4Hou

Iran-Linked Attack on Stryker Wipes 200,000 Devices, Disrupts Hospital Operations

Bottom Line Up Front (BLUF): Iranian threat actors wiped over 200,000 devices at medical technology firm Stryker using Microsoft Intune functionality, disrupting operations and impacting hospital services. The attack combined destructive actions with stealthy malware, highlighting the growing risk of identity and management platform abuse in critical infrastructure environments.

Analyst Comments: Abusing Microsoft Intune’s native wipe capability is an effective tactic. No exploit needed, no malware propagation required, just valid access to device management. That’s a recurring trend: attackers abusing enterprise tools to achieve destructive impact while blending into normal admin activity. The scale is what stands out. Wiping 200,000 devices isn’t just an IT issue; it becomes operational and, in this case, clinical. Hospitals falling back to radios and manual coordination is real-world impact, not just a downtime metric.
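
Because a native wipe looks like ordinary admin activity, volume over time is one of the few signals left. The sketch below is a minimal illustration of that idea, not Stryker's or Microsoft's detection logic. The event fields (`actor`, `action`, `timestamp`) and the thresholds are hypothetical and do not reflect the actual Intune audit log schema.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def flag_bulk_wipes(events, threshold=50, window=timedelta(minutes=10)):
    """Return actors who issued more than `threshold` wipes within `window`.

    `events` is an iterable of dicts with hypothetical keys:
    "actor" (str), "action" (str), "timestamp" (datetime).
    """
    recent = defaultdict(deque)  # actor -> timestamps of recent wipe actions
    flagged = set()
    for event in sorted(events, key=lambda e: e["timestamp"]):
        if event["action"] != "wipe":
            continue
        times = recent[event["actor"]]
        times.append(event["timestamp"])
        # Drop wipes that have aged out of the sliding window.
        while event["timestamp"] - times[0] > window:
            times.popleft()
        if len(times) > threshold:
            flagged.add(event["actor"])
    return flagged
```

In practice the threshold would need tuning against legitimate bulk operations such as device refresh cycles, and an alert like this is only useful if it fires before the wipe commands finish propagating.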

READ THE STORY: The Record

HackerOne Employee Data Breach Linked to Third-Party Vendor Compromise

Bottom Line Up Front (BLUF): HackerOne confirmed a data breach impacting employee information after attackers compromised third-party provider Navia. While core systems remained secure, the incident exposed personal data of 287 individuals—reinforcing that vendor ecosystems remain a primary attack vector.

Analyst Comments: This is a clean example of modern supply chain reality: you can secure your own environment and still lose data through a partner. The attack itself isn’t technically flashy—no zero-days, no novel malware—but it doesn’t need to be. Vendors like Navia sit on sensitive HR and benefits data, which is high-value for identity theft and social engineering. That makes them softer, more attractive targets than hardened primary platforms. The dwell time stands out. Attackers maintained access for weeks before detection. That suggests either weak monitoring on the vendor side or limited visibility into third-party environments—both common problems.

READ THE STORY: CyberPress

LiteLLM Supply Chain Attack Compromises 95M-Download Package, Enables Full Credential Theft and Cloud Takeover

Bottom Line Up Front (BLUF): Attackers compromised the widely used litellm Python package (95M downloads/month), injecting malware that steals credentials, exfiltrates secrets, and enables full cloud and Kubernetes compromise. The attack—linked to TeamPCP—demonstrates an ongoing campaign targeting developer tooling to achieve large-scale supply chain access.

Analyst Comments: Targeting LiteLLM is strategic. It sits in AI pipelines, meaning access to API keys, cloud credentials, and sometimes production workloads. Compromise that layer, and you’re upstream of everything. The .pth trick in version 1.82.8 is particularly nasty. Execution at interpreter startup means you don’t even need to import the package. Just installing it poisons the environment. That bypasses a lot of traditional assumptions about “safe” usage. The Kubernetes angle is where this goes from bad to catastrophic. Automatically deploying privileged pods across clusters? That’s full environment takeover, not just data theft.
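
The startup execution works because Python's `site` module reads every `.pth` file in site-packages when the interpreter starts and executes any line that begins with `import` followed by a space or tab. A minimal sketch of how a defender might list such lines follows. The function name is hypothetical, and this is not the detection method from the original report.

```python
import os


def find_executable_pth_lines(directory):
    """Return (filename, line) pairs for .pth lines the interpreter would execute."""
    hits = []
    for name in sorted(os.listdir(directory)):
        if not name.endswith(".pth"):
            continue
        path = os.path.join(directory, name)
        with open(path, encoding="utf-8", errors="replace") as handle:
            for line in handle:
                # The site module executes lines starting with "import" plus
                # a space or tab; all other lines are treated as path entries.
                if line.startswith(("import ", "import\t")):
                    hits.append((name, line.rstrip("\r\n")))
    return hits
```

To audit a real environment, the function could be called on each directory returned by `site.getsitepackages()`. A hit is not proof of compromise: some legitimate packages, setuptools among them, ship executable `.pth` lines, so results need review against a known-good baseline.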

READ THE STORY: GBhackers

Critical Flaws in Spring AI and ONNX Expose Data and Enable Model Supply Chain Attacks

Bottom Line Up Front (BLUF): New vulnerabilities in Spring AI and ONNX introduce classic injection risks and model trust bypasses into AI pipelines, enabling data leakage and potential model tampering. The findings reinforce that AI frameworks are now part of the enterprise attack surface and must be treated like production-critical systems—not experimental tooling.

Analyst Comments: What we’re seeing is familiar vulnerabilities—SQL injection, trust bypass—reappearing in a new layer that many organizations haven’t secured properly. Spring AI shows how quickly data exposure becomes a problem. If your LLM integration touches databases, logs, or user histories, injection flaws can turn a chatbot into a data exfiltration interface. Multi-tenant environments make this worse—one user querying another’s data through the model layer.
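
The source does not detail the specific Spring AI flaw, so the following is a generic illustration of the usual defense rather than the actual patch. It is written in Python with `sqlite3` for brevity, although Spring AI itself is a Java framework. The table and column names are hypothetical. The key points are that text from the model or user is bound as a parameter, and the tenant identifier comes from the authenticated session rather than from the prompt.

```python
import sqlite3


def fetch_history(conn, tenant_id, search_term):
    """Return messages for one tenant that contain `search_term`."""
    # Placeholders keep model- or user-supplied text out of the SQL grammar.
    # tenant_id must come from the authenticated session, never the prompt.
    query = (
        "SELECT message FROM chat_history "
        "WHERE tenant_id = ? AND message LIKE ? ORDER BY id"
    )
    rows = conn.execute(query, (tenant_id, f"%{search_term}%"))
    return [row[0] for row in rows]
```

With this shape, a search term such as `' OR 1=1 --` is matched as literal text and cannot widen the query to another tenant's rows.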

READ THE STORY: Habr

FCC Bans Foreign-Made Routers Over Supply Chain Risks and Nation-State Exploitation

Bottom Line Up Front (BLUF): The U.S. FCC has banned the sale of new foreign-made consumer routers, citing critical supply chain and cybersecurity risks. The move targets devices frequently exploited by nation-state actors for botnets, espionage, and infrastructure attacks, signaling increased regulatory intervention in hardware security.

Analyst Comments: This is a policy move with real security implications—not just political signaling. Routers have been a soft target for years. Poor patching, weak defaults, and long lifecycles make them ideal for botnets and persistence. What’s different here is the scale of concern: the FCC is treating router supply chains as a national security issue, not just a consumer risk. The reference to groups like Volt Typhoon and Salt Typhoon is key. These actors aren’t just exploiting routers—they’re using them as infrastructure for long-term access and lateral movement into critical systems. That elevates routers from “edge devices” to strategic footholds.

READ THE STORY: THN

Microsoft Azure AI Foundry Introduces Zero-Trust and Model Scanning to Secure Generative AI Supply Chains

Bottom Line Up Front (BLUF): Microsoft is embedding zero-trust principles and proactive model scanning into Azure AI Foundry and Azure OpenAI Service to mitigate risks from malicious or compromised AI models. The approach focuses on isolating customer data, securing model execution environments, and detecting supply chain threats before deployment.

Analyst Comments: The zero-trust angle is expected, but the interesting part is model-level scrutiny. Treating models like software packages—scanning for backdoors, hidden behaviors, and unsafe calls—is exactly where this needs to go. We’ve already seen early signs (e.g., poisoned models, malicious packages in ML ecosystems), so this is a defensive move before it becomes widespread. That said, platform-level scanning isn’t a silver bullet. If organizations blindly trust “verified” models without runtime monitoring, they’re still exposed. AI behavior is probabilistic—malicious functionality doesn’t always trigger during testing.
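
To make "scanning for unsafe calls" concrete: many model checkpoints are serialized with Python's pickle format, which can import and call arbitrary objects on load. The sketch below uses the standard `pickletools` module to list such opcodes without executing the stream. It is an assumption-laden illustration, not a description of how Microsoft's scanner works.

```python
import pickletools

# Opcodes that can import a global or invoke a callable during unpickling.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def risky_opcodes(data: bytes):
    """Return sorted names of import/call opcodes found in a pickle stream.

    The stream is only parsed by pickletools.genops, never loaded.
    """
    found = set()
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            found.add(opcode.name)
    return sorted(found)
```

Legitimate checkpoints also contain these opcodes, so a practical scanner would compare the imported names against an allowlist rather than flag their mere presence. That limitation is one reason static scanning complements runtime monitoring instead of replacing it.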

READ THE STORY: CyberPress

Mobile App Hardening Push Highlights Multi-Platform Security Gaps Across Android, iOS, and HarmonyOS

Bottom Line Up Front (BLUF): A new industry campaign promoting cross-platform app hardening underscores a persistent issue: organizations struggle to secure mobile apps consistently across Android, iOS, and emerging platforms like HarmonyOS NEXT. Attack surfaces such as reverse engineering, tampering, and data leakage remain widespread due to fragmented security controls.

Analyst Comments: Most organizations treat mobile security per platform, not as a unified risk surface. That’s how gaps form. Android gets heavy protection, iOS is assumed “secure by default,” and newer ecosystems like HarmonyOS often lag behind in tooling and scrutiny. Reverse engineering and runtime tampering are still low-bar attacks. If your app logic or API keys live client-side without protection, they will get pulled. The rise of mobile malware, fraud apps, and API abuse is tied directly to weak app hardening. The multi-platform angle matters more now. Businesses are expanding into new ecosystems (especially HarmonyOS in certain markets), but security maturity isn’t keeping up. Attackers will always go after the weakest platform in the stack.

READ THE STORY: 4Hou

Google Passkey Architecture Introduces New Attack Surface in “Passwordless” Ecosystem

Bottom Line Up Front (BLUF): Google’s passkey implementation relies on a hybrid cloud-device architecture that centralizes trust in a cloud authenticator, introducing new potential attack paths. While maintaining strong cryptographic guarantees, the design shifts risk toward device enrollment, recovery flows, and cloud control planes—creating opportunities for account takeover without breaking core WebAuthn standards.

Analyst Comments: The industry narrative around passkeys is “phishing-resistant, hardware-backed, problem solved.” In reality, implementations matter more than standards. Google’s design is solid cryptographically, but it introduces a complex trust chain: TPM → OS → browser → cloud authenticator → sync infrastructure. That’s a lot of moving parts. The weak points aren’t the keys—they’re the glue. Device onboarding, recovery (PIN, account sync), and cloud-side orchestration are where attackers will focus. If you can hijack a device into the “security domain” or abuse recovery flows, you don’t need to break FIDO—you just get legitimate assertions.

READ THE STORY: GBhackers

Kali Linux 2026.1 Release Adds New Offensive Tools and Expands Mobile Pentesting Capabilities

Bottom Line Up Front (BLUF): Kali Linux 2026.1 introduces eight new offensive security tools, kernel upgrades, and enhanced mobile penetration testing features. The release reflects continued investment in adversarial emulation and real-world attack simulation capabilities, particularly across cloud, web, and mobile environments.

Analyst Comments: Kali releases are always incremental—but the tool selection tells you where offensive tradecraft is heading. This update leans heavily into adversarial emulation (Atomic-Operator, AdaptixC2) and modern web attack surfaces (SSTI, XSS, WordPress enumeration). That aligns with what defenders are actually seeing: web apps and cloud services are still the primary battleground. The mobile improvements are more interesting than they look. Expanding wireless injection and SDR-like capabilities on consumer devices lowers the barrier to entry for physical-layer and proximity attacks. You don’t need specialized hardware anymore—just the right phone and kernel.

READ THE STORY: GBhackers

Spur Enhances IP Intelligence to Detect AI and Anonymized Traffic in Real Time

Bottom Line Up Front (BLUF): Spur has upgraded its IP intelligence platform to identify AI-generated traffic and anonymized infrastructure (VPNs, proxies, data centers) in real time. The enhancements enable security teams to make dynamic access decisions, improving fraud detection and reducing abuse tied to automated and masked activity.

Analyst Comments: Traditional IP reputation (good/bad, geo, ASN) isn’t enough anymore. Attackers aren’t just hiding behind VPNs—they’re increasingly using AI infrastructure itself to generate traffic. That blurs the line between legitimate automation and abuse. The AI service tagging is the standout feature. Being able to identify traffic coming from OpenAI, Anthropic, etc., gives defenders context—but it also creates a new challenge. Not all AI-origin traffic is malicious. Block too aggressively and you break legitimate integrations; allow too much and bots walk right in.
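
One way to avoid the block-everything or allow-everything trap is a tiered decision that escalates only on sensitive routes. The sketch below assumes hypothetical tag names (`known_abuse`, `vpn`, `proxy`, `ai_service`). They are not Spur's actual field names, and the policy itself is illustrative.

```python
def access_decision(tags, sensitive_route):
    """Map hypothetical IP-context tags to 'allow', 'challenge', or 'block'."""
    tags = set(tags)
    if "known_abuse" in tags:
        return "block"
    if tags & {"vpn", "proxy", "ai_service"}:
        # Masked or AI-origin traffic is not inherently malicious, so it is
        # only challenged where the cost of abuse is high (login, checkout).
        return "challenge" if sensitive_route else "allow"
    return "allow"
```

For example, `access_decision(["ai_service"], sensitive_route=False)` lets a legitimate integration read public content, while the same traffic hitting a login endpoint gets a step-up challenge.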

READ THE STORY: HNS

Items of interest

AI Coding Assistants Introduce New Client-Side Attack Surface, Undermining Endpoint Security

Bottom Line Up Front (BLUF): AI coding tools like Codex, Claude Code, and Gemini are creating a new class of client-side threats by operating with high privileges on developer endpoints. Attackers can exploit configuration files, plugins, and automation features to execute malicious actions—effectively bypassing traditional endpoint defenses.

Analyst Comments: For years, security teams hardened endpoints, moved workloads to the cloud, and reduced local execution risk. AI coding agents just reversed that trend. They need deep local access—filesystems, configs, credentials—so developers grant it. That’s the hole. The real problem isn’t just vulnerabilities—it’s trust. These agents are treated like helpful assistants, not execution engines. But under the hood, they’re running commands, parsing configs, and connecting to services with minimal visibility. The config file angle is especially concerning. We’ve spent decades teaching people not to run unknown binaries—but now a .env or .toml file can trigger execution through an AI agent. That’s a mental model gap attackers will exploit hard.

READ THE STORY: DR

Spec-Driven Development: AI Assisted Coding Explained (Video)

FROM THE MEDIA: Is AI-assisted coding the future? Cedric Clyburn explores spec-driven development, a game-changing approach that combines LLMs with software development best practices. Learn how it differs from vibe coding, integrates SDLC principles, and improves coding workflows with requirements-driven precision.

From Free to $300: The Best Options for AI Coding (Video)

FROM THE MEDIA: The best AI coding tools at each tier from FREE to $300.

The selected stories cover a broad range of cyber threats and are intended to help readers frame key publicly discussed threats and improve overall situational awareness. InfoDom Securities does not endorse any third-party claims made in its original material or related links on its sites; the opinions expressed by third parties are theirs alone. For further questions, don't hesitate to get in touch with InfoDom Securities at dominanceinformation@gmail.com.